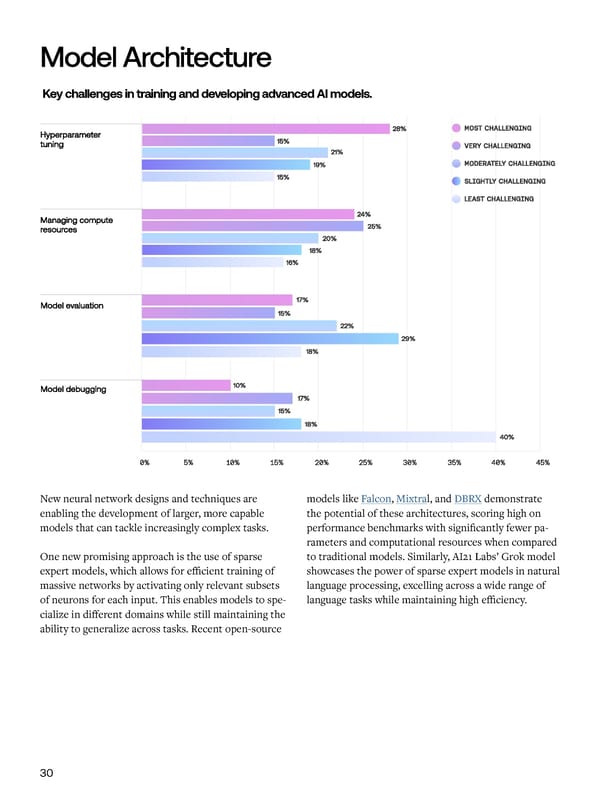

Key challenges in training and developing advanced AI models.

Model Architecture Trends

New neural network designs and techniques are enabling the development of larger, more capable models that can tackle increasingly complex tasks.

One promising new approach is the use of sparse expert models, which allows for efficient training of massive networks by activating only relevant subsets of neurons for each input. This enables models to specialize in different domains while still maintaining the ability to generalize across tasks. Recent open-source models like Falcon, Mixtral, and DBRX demonstrate the potential of these architectures, scoring high on performance benchmarks with significantly fewer parameters and computational resources when compared to traditional models. Similarly, AI21 Labs’ Grok model showcases the power of sparse expert models in natural language processing, excelling across a wide range of language tasks while maintaining high efficiency.

Computational Resources

Demand for compute continues to grow, with model training requiring huge clusters of specialized accelerators like GPUs and TPUs. However, the industry is undergoing a significant shift away from traditional CPUs towards these accelerator architectures optimized for AI workloads. This transition brings significant challenges in terms of infrastructure, tooling, and resource management.

The survey highlights the magnitude of this shift, with over 48% of respondents rating compute resource management as “most challenging” or “very challenging”.

“CPUs consume about 80% of IT workloads today. GPUs consume about 20%. That’s going to flip in the short term, meaning 3 to 5 years. Many industry leaders that I’ve talked to at Google and elsewhere believe that in 3 to 5 years, 80% of IT workloads will be running on some type of architecture that is not CPU, but rather some type of chip architecture like a GPU.”
- Jon Barker, Customer Engineer, Google

This rapid transition towards more costly GPU and TPU-centric workloads presents a number of challenges. While these accelerators offer unparalleled performance for AI tasks, they also require a different programming model, tooling ecosystem, and set of optimization techniques compared to traditional CPU-based workloads. Further, large models are usually trained across many accelerators and distributed across many machines in parallel, requiring complex orchestration frameworks.

To address these challenges, PyTorch introduced Fully Sharded Data Parallel (FSDP). FSDP is a data parallelism paradigm that shards model parameters, gradients, and optimizer states across data-parallel workers, enabling more efficient memory usage and training of larger models.

In addition to the challenge of compute resource management, model builders also face obstacles due to a lack of suitable tools and frameworks. 38% of respondents indicated that the absence of AI-specific libraries, frameworks, and platforms is a major challenge holding back their AI projects. These tools are crucial for abstracting away the complexities of distributed computing and accelerator programming, allowing researchers to focus on model development and experimentation.
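To make the memory benefit of sharding parameters, gradients, and optimizer states concrete, here is a rough back-of-the-envelope sketch in plain Python. It does not call PyTorch or FSDP. The function name `per_worker_state_gb` and the default of 16 bytes of training state per parameter are assumptions for illustration (a common accounting for mixed-precision Adam); actual figures depend on the optimizer, precision, and configuration, and activations are not counted.

```python
def per_worker_state_gb(
    num_params: int,
    num_workers: int,
    bytes_per_param: int = 16,
    sharded: bool = True,
) -> float:
    """Estimate per-worker memory (in GB) for model training state.

    bytes_per_param is an ASSUMPTION: 2 bytes for half-precision weights,
    2 bytes for half-precision gradients, and 12 bytes for fp32 optimizer
    state (master weights plus two Adam moments). Activations and
    temporary buffers are deliberately ignored.
    """
    if num_workers < 1:
        raise ValueError("num_workers must be at least 1")
    total_bytes = num_params * bytes_per_param
    if sharded:
        # Sharded data parallelism: each worker stores only its own slice
        # of the parameters, gradients, and optimizer states.
        total_bytes = total_bytes / num_workers
    # Without sharding, every worker holds a full replica of all state.
    return total_bytes / 1e9


# Hypothetical example: a 7-billion-parameter model on 8 workers.
replicated_gb = per_worker_state_gb(7_000_000_000, 8, sharded=False)
sharded_gb = per_worker_state_gb(7_000_000_000, 8, sharded=True)
```

Under these assumptions, the replicated setup needs about 112 GB of state per worker, while the sharded setup needs about 14 GB. In practice the peak is higher than the sharded figure, because a sharded approach has to gather the full parameters of a layer temporarily while computing with it.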

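The idea of activating only a relevant subset of a sparse expert model for each input can be sketched as top-k routing. This is a minimal illustration in plain Python, not the implementation used by any of the models named in the report. The function names, the scalar toy experts, and the hand-supplied gate scores are all hypothetical; a real model computes gate scores with a learned router and operates on tensors.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_scores: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Select the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_scores)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def sparse_expert_layer(
    x: float,
    gate_scores: Sequence[float],
    experts: Sequence[Callable[[float], float]],
    k: int = 2,
) -> float:
    """Run only the k selected experts and mix their outputs by gate weight."""
    routes = top_k_route(gate_scores, k)
    # Experts that were not selected are never called, which is where
    # the compute saving of a sparse layer comes from.
    return sum(weight * experts[i](x) for i, weight in routes)


# Hypothetical toy experts: each is just a scalar function.
toy_experts = [
    lambda v: v + 1.0,
    lambda v: v * 2.0,
    lambda v: v - 3.0,
    lambda v: v * v,
]
# Hypothetical gate scores; a learned router would produce these from x.
toy_scores = [0.1, 2.0, -1.0, 1.5]
toy_output = sparse_expert_layer(3.0, toy_scores, toy_experts, k=2)
```

With these toy scores, only experts 1 and 3 are evaluated for the input, so the layer does the work of two experts even though four exist. Total parameter count grows with the number of experts, while per-input compute grows only with `k`.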
AI Readiness Report 2024 Page 31 Page 33